E. T. Jaynes expounded the principle of maximum entropy in two papers in 1957, in which he emphasized a natural correspondence between statistical mechanics and information theory. In particular, Jaynes offered a new and very general rationale for why the Gibbsian method of statistical mechanics works. He argued that the entropy of statistical mechanics and the information entropy of information theory are basically the same thing. Consequently, statistical mechanics should be seen as just one particular application of a general tool of logical inference and information theory.
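
To make the Gibbsian connection concrete, here is a sketch of the standard textbook argument. It assumes a system with discrete energy levels $E_i$ and a known mean energy $\langle E\rangle$; these symbols, the Lagrange multipliers $\alpha$ and $\beta$, and the partition function $Z$ are notation introduced here for illustration. Maximize the information entropy $H = -\sum_i p_i \ln p_i$ subject to normalization and the mean-energy constraint:

$$
\mathcal{L} = -\sum_i p_i \ln p_i - \alpha\Big(\sum_i p_i - 1\Big) - \beta\Big(\sum_i p_i E_i - \langle E\rangle\Big)
$$

Setting $\partial\mathcal{L}/\partial p_i = 0$ gives

$$
-\ln p_i - 1 - \alpha - \beta E_i = 0 \quad\Longrightarrow\quad p_i = \frac{e^{-\beta E_i}}{Z}, \qquad Z = \sum_j e^{-\beta E_j},
$$

where normalization absorbs the constant $e^{-1-\alpha}$ into $1/Z$. Substituting $\ln p_i = -\beta E_i - \ln Z$ back into $H$:

$$
H = \beta\langle E\rangle + \ln Z .
$$

With $\beta = 1/(k_B T)$ and $S = k_B H$, this is $S = \langle E\rangle/T + k_B \ln Z$, the Gibbs entropy of the canonical ensemble, which rearranges to the familiar $F = -k_B T \ln Z = \langle E\rangle - TS$. In this reading, the Boltzmann distribution is simply the least-committal distribution consistent with a known average energy.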

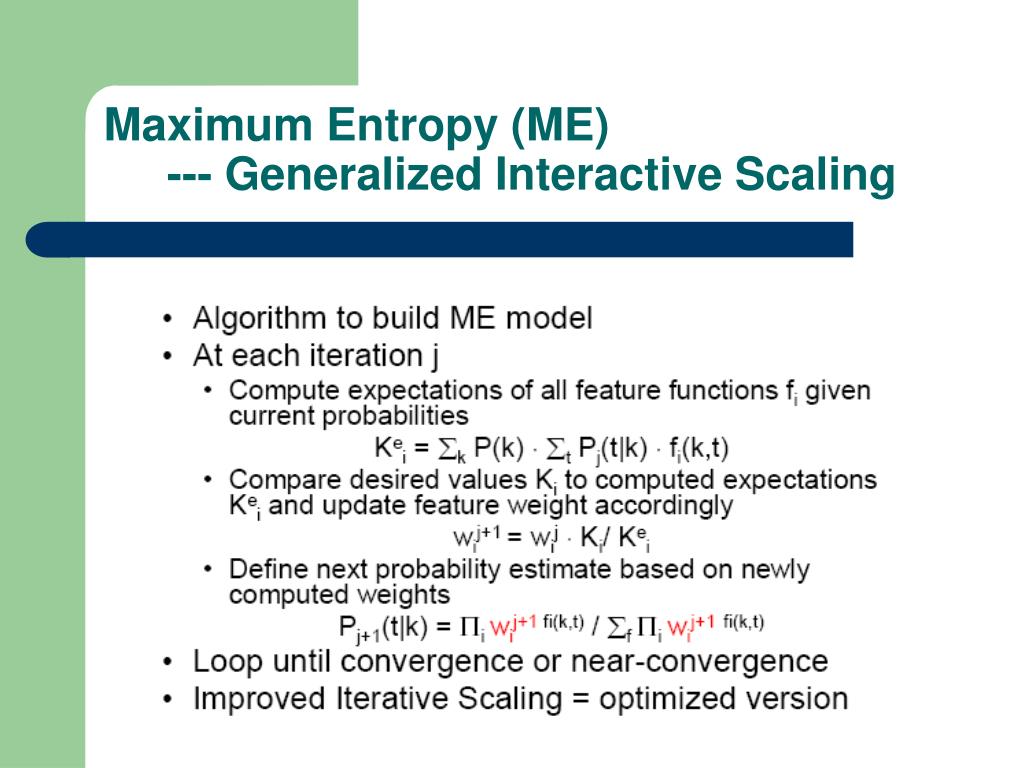

The principle of maximum entropy states that the probability distribution which best represents the current state of knowledge about a system is the one with the largest entropy, given precisely stated prior data (such as a proposition that expresses testable information).

Another way of stating this: take precisely stated prior data or testable information about a probability distribution function, and consider the set of all trial probability distributions that would encode the prior data. According to this principle, the distribution in that set with maximal information entropy is the best choice.
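
As an illustration, consider the classic loaded-die problem: the only prior data is that a six-sided die has a long-run average of 4.5. The minimal Python sketch below assumes the standard result that maximizing entropy under a single mean constraint yields an exponential-family distribution $p_k \propto e^{\lambda k}$, and finds $\lambda$ by bisection so the mean matches. The function names `maxent_die` and `entropy` and the comparison distribution `alt` are hypothetical, chosen for this example only.

```python
import math


def entropy(p):
    """Shannon entropy in nats; terms with p_k = 0 contribute nothing."""
    return -sum(pk * math.log(pk) for pk in p if pk > 0)


def maxent_die(target_mean, faces=range(1, 7), tol=1e-12):
    """Maximum-entropy distribution over die faces with a fixed mean.

    Assumes the maxent solution under one mean constraint has the form
    p_k ∝ exp(lam * k); lam is found by bisection on the mean.
    """
    faces = list(faces)
    if not min(faces) < target_mean < max(faces):
        raise ValueError("target mean must lie strictly between the extreme faces")

    def dist(lam):
        # Subtract the largest exponent before exp() for numerical stability.
        m = max(lam * k for k in faces)
        w = [math.exp(lam * k - m) for k in faces]
        z = sum(w)
        return [x / z for x in w]

    def mean(p):
        return sum(k * pk for k, pk in zip(faces, p))

    # The mean is strictly increasing in lam (its derivative is the variance),
    # so bisection on a wide bracket converges.
    lo, hi = -50.0, 50.0
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if mean(dist(mid)) < target_mean:
            lo = mid
        else:
            hi = mid
    return dist((lo + hi) / 2)


if __name__ == "__main__":
    p = maxent_die(4.5)
    print("maxent:", [round(x, 4) for x in p], "H =", round(entropy(p), 4))
    # Another distribution with the same mean (4.5) for comparison.
    alt = [0.05, 0.05, 0.1, 0.25, 0.25, 0.3]
    print("alt:   ", alt, "H =", round(entropy(alt), 4))
```

Any other distribution encoding the same prior data, such as `alt`, has lower entropy than the maxent one; with a target mean of 3.5 the sketch recovers the uniform distribution, matching the intuition that no information beyond the fair-die average should favor any face.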
